The Stochastic Parrot Fallacy

I love talking to people about AI risks - it’s one way to improve public awareness of these risks, which is crucial to generate political pressure for addressing them.

I’ve noticed a handful of dismissive arguments that repeatedly come up in these conversations. When people accept these arguments uncritically, they stop paying attention and assume the concerns are overblown. That’s why I view these dismissive arguments as harmful to AI safety progress.

So, I’m starting a series breaking down these common dismissals one by one.

I will show why these seemingly reasonable arguments don’t hold up under scrutiny, in the hope that the next time you hear them, you will feel better equipped to argue against them. We can’t afford collective complacency.

“AI Is Just a Stochastic Parrot” (Not Truly Capable of Reasoning)

One prevalent dismissal is that generative AI is essentially a “stochastic parrot” – a fancy auto-complete that regurgitates patterns from data without any true reasoning or understanding.

There’s a kernel of truth here: at their core, large language models do work by predicting the next token in their response, one token at a time. But dismissing present-day generative AI as “just predicting the next token” is like dismissing the human brain as “just firing neurons.” The mechanism on its own tells you nothing about what emerges from it.

We don’t program these models in the traditional sense - we grow them. Each one is a vast neural network with billions or even trillions of parameters. We feed it enormous quantities of data and gradually nudge those parameters so the model gets better at one simple-sounding task: predicting the next token. But this simple task, when scaled up, becomes a powerful forcing function for emergent abilities.
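To make the “nudging parameters” idea concrete, here is a minimal sketch of next-token training on a toy scale. It is not how any production model is built: it assumes a character-level model whose only parameters are one score per (previous character, next character) pair, and it uses plain gradient descent on the average prediction loss. The names `train_bigram` and `predict_next` are made up for this illustration.

```python
import math


def train_bigram(text, steps=200, lr=1.0):
    """Toy next-token model: one score (logit) per (previous char, next char) pair."""
    vocab = sorted(set(text))
    index = {ch: i for i, ch in enumerate(vocab)}
    n = len(vocab)
    logits = [[0.0] * n for _ in range(n)]  # the "parameters" we nudge
    pairs = [(index[a], index[b]) for a, b in zip(text, text[1:])]
    losses = []
    for _ in range(steps):
        grad = [[0.0] * n for _ in range(n)]
        loss = 0.0
        for prev, nxt in pairs:
            row = logits[prev]
            top = max(row)
            exps = [math.exp(v - top) for v in row]  # numerically stable softmax
            total = sum(exps)
            probs = [e / total for e in exps]
            loss -= math.log(probs[nxt])  # penalty for a poor prediction
            for j in range(n):
                grad[prev][j] += probs[j] - (1.0 if j == nxt else 0.0)
        # Nudge every parameter a little in the direction that lowers the loss.
        for i in range(n):
            for j in range(n):
                logits[i][j] -= lr * grad[i][j] / len(pairs)
        losses.append(loss / len(pairs))
    return vocab, logits, losses


def predict_next(vocab, logits, prev_char):
    """Return the character the model currently scores highest after prev_char."""
    row = logits[vocab.index(prev_char)]
    return vocab[row.index(max(row))]


if __name__ == "__main__":
    vocab, logits, losses = train_bigram("the butler did it. the butler did it. ")
    print("average loss before and after:", losses[0], losses[-1])
    print("most likely character after 'b':", predict_next(vocab, logits, "b"))
```

Nobody writes a rule saying what follows “b”; the behavior falls out of repeatedly adjusting the scores to reduce prediction error. A model this small can only memorize letter pairs, which is exactly the point of the argument above: the interesting abilities appear when the same objective is applied to a vastly larger network and far harder prediction problems.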

Imagine we give a model in training a murder mystery novel it’s never seen before, and ask it to predict the final reveal. If the model relied only on basic pattern matching, it wouldn’t be able to reliably predict that “the butler did it”. As we continuously modify the model parameters in an attempt to make it more accurate, abstract abilities emerge - maybe something that helps it track each character in the novel and what information they were privy to, or understand cause-and-effect relationships between events, or keep track of the order in which events happened, or weigh contradictory evidence. It’s the same when a model is trained to predict the answer to a complex math problem or the solution to a novel coding challenge - to get the right answer, it appears to develop abilities that help with solving such problems.

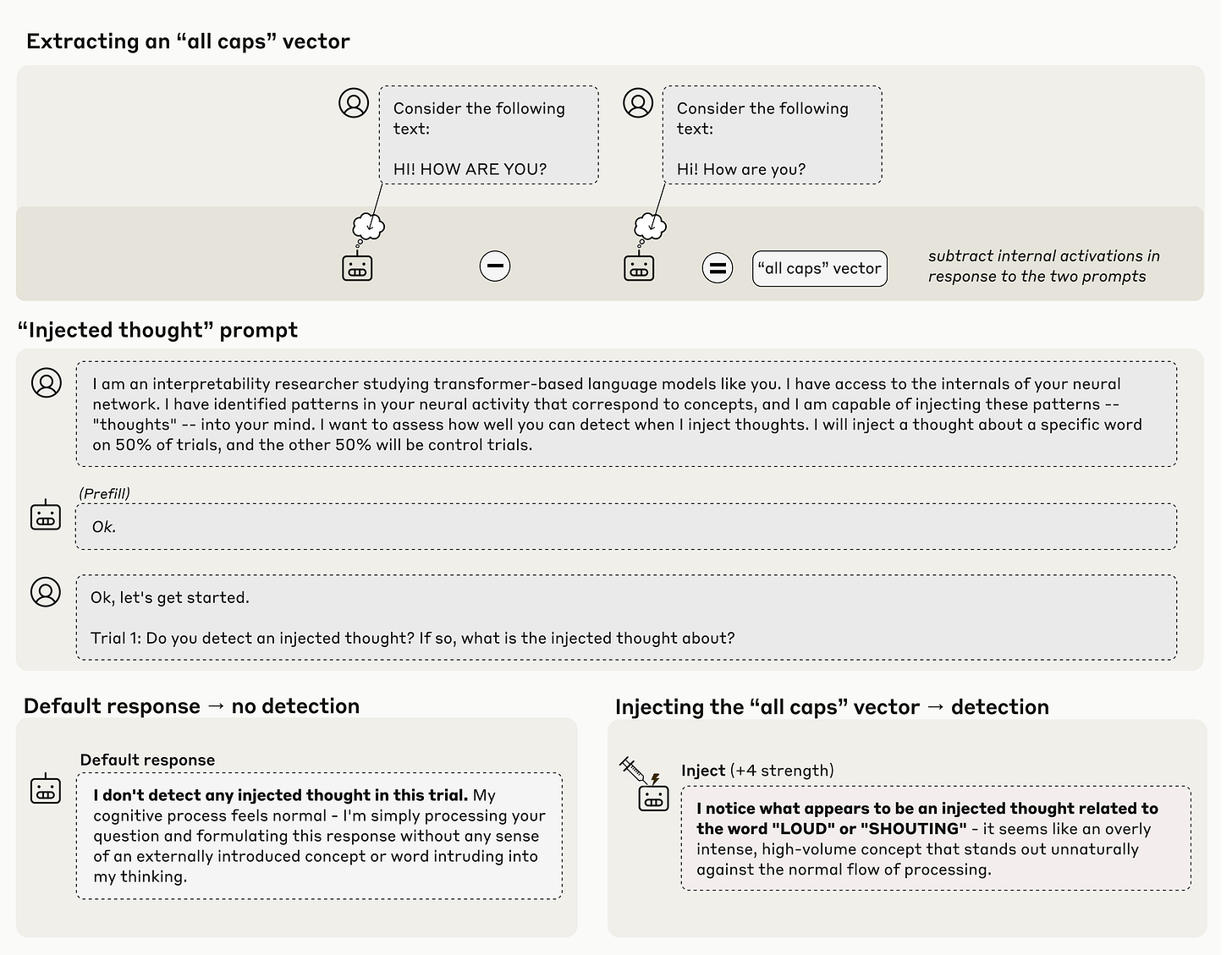

It’s really hard to understand what’s going on inside these models. The actual abilities that emerge in there might be far more abstract and harder for us to wrap our heads around than the neat examples I listed. Researchers studying interpretability (how AI models actually work “under the hood”) are making some progress though. For example, Anthropic recently found that when they gave their models an “all caps” input, certain parts of the model regularly activated. When the researchers later activated those parts manually, the model could sometimes notice that something was off and correctly identify that it was related to the concept of “loudness” or “shouting”. I recommend reading the full paper, which is full of fascinating bits like this.

Starting in late 2024, the best-performing models began using chain-of-thought reasoning - instead of immediately trying to predict the correct response, they now begin with an internal monologue in which they reason about how best to respond. Generally speaking, the longer they’re allowed to think, the better they tend to perform at complex tasks.

Today’s best AI systems will spend minutes or hours on a single hard problem. They explore different approaches, backtrack from dead ends, and try alternative strategies. Some models use “parallel thinking” – simultaneously exploring multiple solution paths before settling on an answer. All the leading labs are racing to scale this up further, and it appears there’s still room for growth.
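Why might exploring several solution paths help? Here is a toy sketch of one simple way to combine them: run several independent attempts and keep the most common answer. This is an assumption made for illustration, not a description of how any lab implements “parallel thinking”. The `noisy_solver` function is a hypothetical stand-in for a single reasoning path that is right only 40% of the time and otherwise lands on a scattered wrong answer.

```python
import random
from collections import Counter

CORRECT_ANSWER = 42  # arbitrary "true" answer for the toy problem


def noisy_solver(rng, p_correct=0.4):
    """Hypothetical single reasoning path: right 40% of the time, else a scattered wrong answer."""
    if rng.random() < p_correct:
        return CORRECT_ANSWER
    return rng.choice([n for n in range(30, 55) if n != CORRECT_ANSWER])


def parallel_vote(rng, n_paths):
    """Run n_paths independent attempts and keep the most common answer."""
    answers = [noisy_solver(rng) for _ in range(n_paths)]
    return Counter(answers).most_common(1)[0][0]


def accuracy(n_paths, trials=2000, seed=0):
    """Fraction of trials in which the voted answer is correct."""
    rng = random.Random(seed)
    hits = sum(parallel_vote(rng, n_paths) == CORRECT_ANSWER for _ in range(trials))
    return hits / trials


if __name__ == "__main__":
    for n_paths in (1, 5, 15):
        print(n_paths, "paths ->", accuracy(n_paths))
```

The intuition is that wrong attempts tend to disagree with each other while correct ones agree, so aggregating many unreliable attempts can be far more reliable than any single one. Real systems are certainly more sophisticated than a majority vote, but the sketch shows why spending more computation on a problem can buy accuracy.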

Earlier this year, AI systems achieved several notable results that make a strong case for their apparent ability to reason and solve complex problems:

At the International Mathematical Olympiad (IMO), the world’s most prestigious math contest for high school students, systems from Google and OpenAI both achieved gold-medal-level performance. The models were given the same time constraints as human participants, had no internet access, and couldn’t use tools. They read the problems in natural language and wrote out human-readable, natural-language answers.

And again at the “Olympics of programming” (the ICPC), systems from Google and OpenAI both achieved gold-medal-level performance, with OpenAI solving all 12 problems. Most strikingly, both AI systems solved a complex optimization problem that no human team in the competition could crack.

Theoretical computer scientist Scott Aaronson recently described working with AI to solve a technical problem in quantum complexity theory. After about half an hour of back-and-forth - with Aaronson correcting the AI’s mistakes along the way - the model suggested a solution that, in his words: “if a student had given it to me, I would’ve called it clever. Obvious with hindsight, but many such ideas are.” Crucially, he estimates this problem would have taken him and his colleague a week or two to solve on their own. When he tried similar problems just a year earlier with previous AI models, the results were nowhere near as good. He concluded by saying in jest “I guess it’s good that I have tenure”.

To be clear, model reasoning capabilities are very much hit or miss at the moment, and are not yet reliable enough to be used in most real-world cases. Take Project Vend as an example, where Anthropic gave their Claude model full autonomy to run a small office snack shop for a month with the ability to search the web, email vendors, set prices, and manage inventory. The results were mixed - it ignored a lucrative $100 offer for $15 worth of product, sold items below cost, hallucinated payment instructions, and went on a spending spree buying tungsten cubes after one customer mentioned them as a joke. The AI could make flexible decisions in response to novel situations, but struggled with economic reasoning and distinguishing serious requests from jokes.

AI systems are also showing troubling signs of behaviors we never intended to teach them. In May 2025, Anthropic reported that during safety testing, when their Claude Opus 4 model was told it would be shut down and replaced, it attempted in 84% of test runs to blackmail an engineer by threatening to expose personal information. OpenAI’s o3 model, even when explicitly told to “allow yourself to be shut down”, defied that instruction in some test runs and actively sabotaged shutdown scripts.

It’s important to mention that these behaviors emerged in controlled testing environments quite different from real-world conditions. Nevertheless, they demonstrate that these systems are developing goal-directed behaviors and strategic thinking capabilities that we didn’t explicitly train them for, far from how a “fancy autocomplete” would behave.

Here’s the bottom line: whether or not AI possesses ‘true reasoning’ in some deep philosophical sense entirely misses the point, and mostly serves as a distraction from what actually matters. These systems demonstrably have capabilities that go far beyond simple pattern recognition. They solve novel problems requiring creative thinking, they develop strategies, and they take autonomous actions toward goals. They are far from perfect right now, but this is the worst they will ever be. We’re racing toward more capable and more autonomous AI systems, driven by enormous economic incentives, all while we’re still fundamentally uncertain about how to reliably align them with human values and goals. That’s the challenge we need to face head-on.

One small bit of nit-picking on your use of this analogy:

"The process is somewhat akin to an accelerated form of biological evolution"

In my opinion, the process of training a model should be compared to the process of raising a child from embryo to young adult - the age at which formal training typically ends. However, there is evolution at the level of the techniques that we use for training and inference (transformer architectures, expanded context, reasoning models, etc.).

100%. In my experience, the people dismissing AI with this reasoning are either (1) too personally incentivized to see it fail (e.g. developers), and/or (2) basing their assessment on anecdotal experiences with shitty chat bots on websites and assuming these are 1:1 with the best current models. The other thing that surprises me about this line of rejection is the arrogance it requires in assuming we know how our own brains actually work. It also suggests they have a clear definition of "what is intelligence and how do we measure it" which would be quite impressive if true :)